We are surrounded!

By data.

Whether simple or complex, we are presented with data every day in a range of formats that we interpret through one or more of our five senses. Some data is simple and can be interpreted clearly and concisely with little work, like a dark shape on a light background. Other data is complex; think of a cooking spice.

If you see chopped green leaves, your brain reduces the range of possible spices to the ones that are green. If you can smell it, and if your brain has registered that scent with the name, you might recognize it correctly. Finally, if you can see the name spelled out – more visual information – you can be nearly certain what those chopped green leaves are.

Photo by Kevin Doran on Unsplash

Just as written data has transformed from cave drawings to moveable type to digital text over millennia, other visual data has transformed significantly in a relatively short time.

Do you still own a camera that uses film? Probably not. If you use a smartphone, you have at least one camera in that device, and it likely takes very good photos.

Over the past several decades, digital cameras have improved significantly, offering higher image resolution in smaller devices. However, despite the technical advances of digital photography, still images are, well, still; they remain a two-dimensional (2D) representation of a scene. Additional context – additional data – can be recorded using “burst” photos or video. Yet even with that extra information, the visualization is still 2D, and if you want more information from a scene, you must return to the location and take more photos or video; you must collect more data.

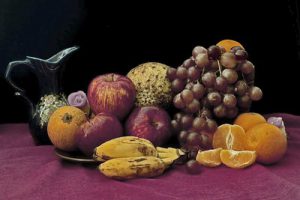

“Still Life – Fruits” by Hafiz Issadeen is licensed under CC BY 2.0

Of course, if the subject of interest is easily accessible, such as a bowl of fruit, you can return to that place and get more photos. You can interrogate the subject – move around it and photograph different views that show information that was not visible in the original photo. You can collect and see more data.

As devices and software have grown more capable, three-dimensional (3D) data has become more common in entertainment, product design, training, gaming and healthcare. Free and low-cost tools allow nearly anyone to create 3D content for a range of consumers on many different devices. The challenge is that even after you create amazing content, it is often difficult and time-consuming to get that content to the devices that your audience wants to use.

In one example that everyone can relate to, the movie industry has transformed entertainment delivery from theater screen to DVD rentals to online streaming. Other industries are also transforming how their content is consumed. For example, both Virtual Reality (VR) and Augmented Reality (AR) are becoming more common for certain types of training and technical simulation. Service technicians can more easily repair complex products, students can learn in a visually rich immersive lesson, and physicians can see and utilize 3D models for a range of use cases. In each of those examples, the user is able to interrogate the subject – to collect more data – whenever and wherever they may be.

When you cannot be in the same place as the subject, or cannot meet at the same time and place as an instructor or colleague, the portability of 3D data improves communication and collaboration like never before!

MedReality has developed a way to simplify sharing 3D models to a range of viewing devices.

If you have 3D models that you would like to view in AR or VR, our platform can help you get those models onto a smartphone or headset in minutes and share them with colleagues across town or around the world. With our free trial you will be amazed when you see your own models in rich detail on an iPhone, HoloLens 2, or Oculus Quest.

With MedReality, advanced visualization is as easy as 1, 2, 3D!
